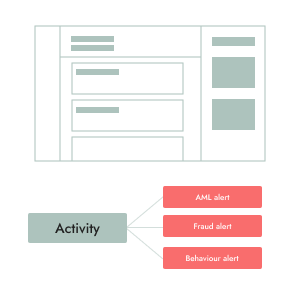

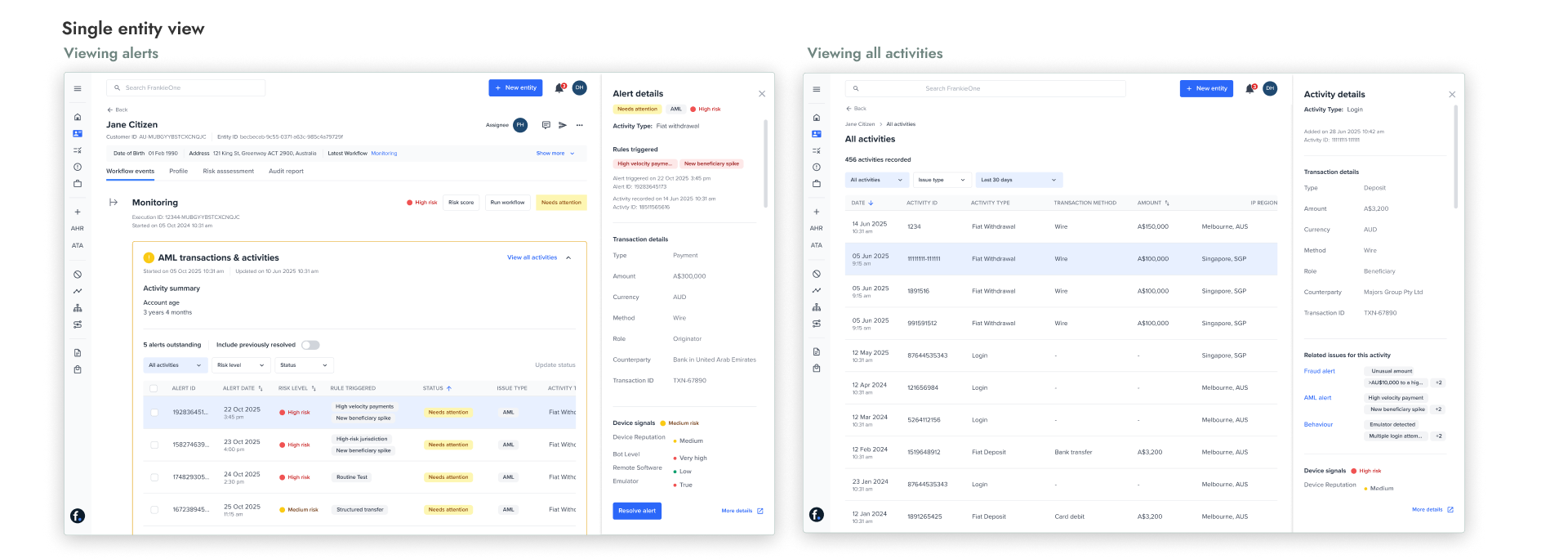

Linking alerts to activities as the core investigative model

An activity can trigger multiple alerts across different rules, but operators ultimately need to assess risk at the activity level.

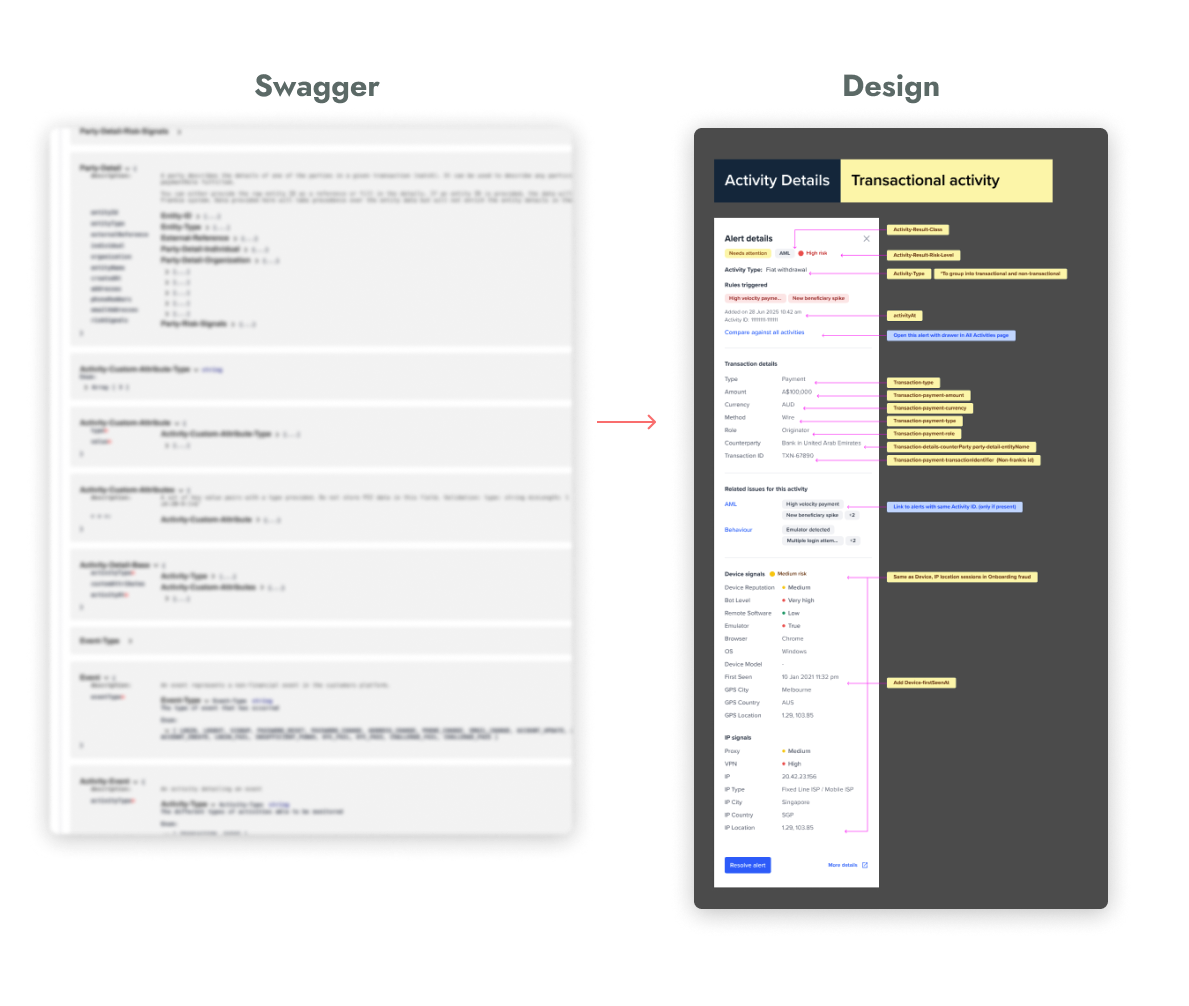

I designed a clear relationship between alerts and activities:

Activities are visibly marked as suspicious based on the aggregated outcomes of all their linked alerts, so risk is never judged from a single alert in isolation.
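To make the relationship concrete, here is a minimal sketch of how alerts could roll up to an activity-level flag. It is illustrative only: the `Alert` fields, the outcome values (`"open"`, `"confirmed"`, `"dismissed"`), and the rule that any non-dismissed alert marks the activity as suspicious are all assumptions, not the product's actual data model or logic.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    # All field names are hypothetical, chosen for illustration.
    alert_id: str
    activity_id: str  # the activity this alert is linked to
    rule: str         # the rule that raised the alert
    outcome: str      # assumed values: "open", "confirmed", "dismissed"


def group_by_activity(alerts):
    """Group alerts under the activity that triggered them."""
    grouped = defaultdict(list)
    for alert in alerts:
        grouped[alert.activity_id].append(alert)
    return dict(grouped)


def suspicious_activities(alerts):
    """Return the ids of activities with at least one non-dismissed alert.

    Assumed aggregation rule: an activity stays flagged until every
    alert linked to it has been dismissed.
    """
    return {
        activity_id
        for activity_id, linked in group_by_activity(alerts).items()
        if any(a.outcome != "dismissed" for a in linked)
    }
```

Under this assumed rule, one activity that triggers alerts from two different rules is flagged once at the activity level, and it stays flagged until both alerts are dismissed.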